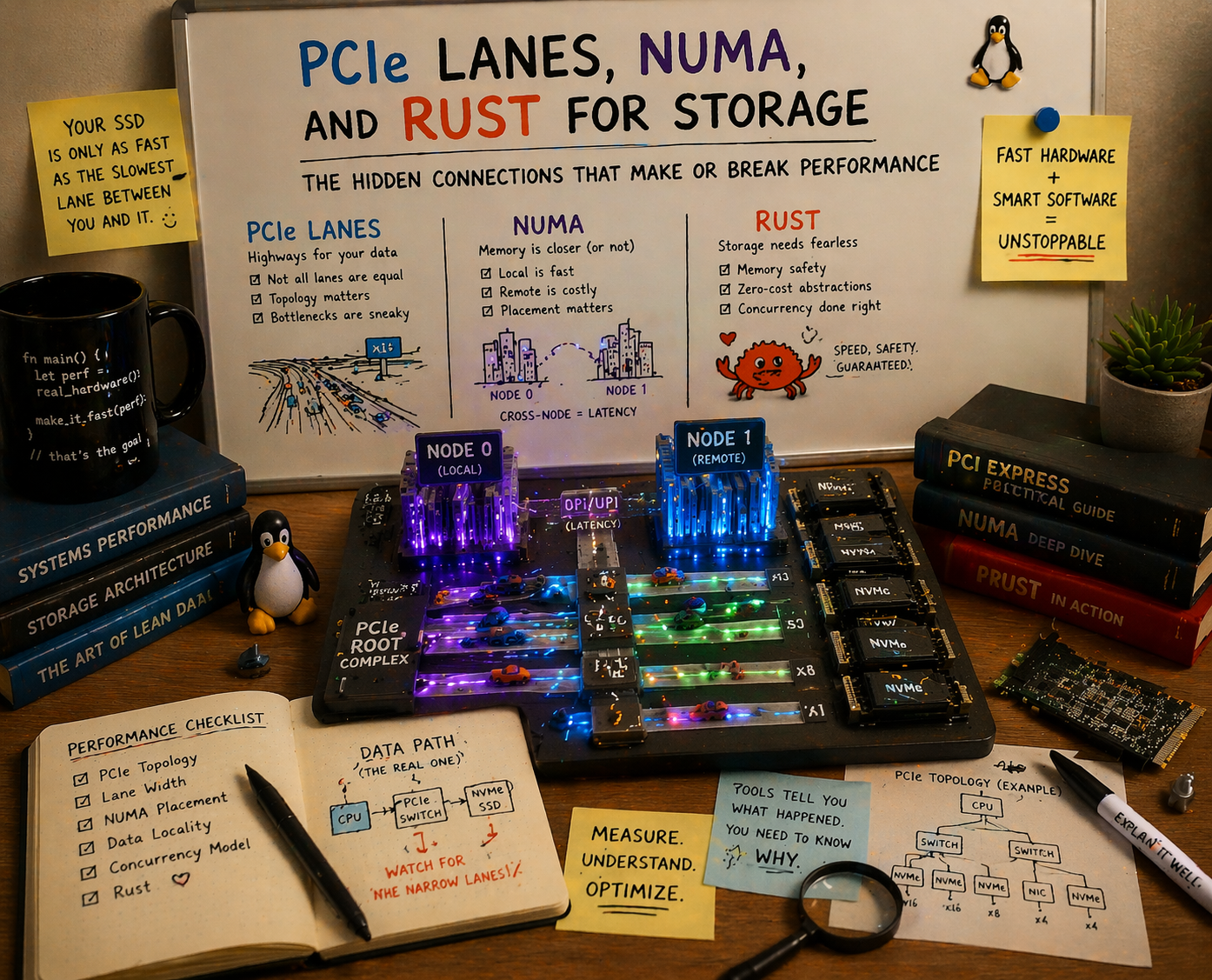

The NVMe Density Problem: PCIe Lanes, NUMA, and Language Choice

As NVMe counts per chassis climb past 24, 32, and toward 48 drives, the bottleneck shifts from disk speed to PCIe topology. The solution is dual-socket CPUs with massive lane counts, but only if your storage software can actually exploit them without a NUMA penalty. Here’s why Go can’t, and Rust can.

The Lane Crisis: More NVMe Drives Than Your CPU Can Feed

The economics of flash storage are pushing NVMe density to levels that would have been absurd five years ago. With EDSFF E1.S form factors, a 1U server can pack 24 to 32 NVMe drives. In 2U, that number climbs to 36-48 drives. Enterprise SSDs are shipping at 30 TB and 60 TB capacities, meaning a single 2U chassis can hold over a petabyte of raw flash.

But there’s a physics problem. Each NVMe drive requires a PCIe x4 link, four lanes of dedicated bandwidth. The math is brutal:

| Drive Count | PCIe Lanes (NVMe only) | + 2x 100GbE NIC | + Management | Total Needed |

|---|---|---|---|---|

| 24 drives | 96 | +32 | +4 | 132 |

| 32 drives | 128 | +32 | +4 | 164 |

| 48 drives | 192 | +32 | +4 | 228 |

No single-socket CPU on the market today provides 132+ PCIe lanes. The gap between what NVMe-dense chassis demand and what one CPU socket can supply forces one of two compromises:

- PCIe switches to fan out limited CPU lanes to more devices

- Dual-socket CPUs to double the lane count with a second processor

Both have costs. But one of those costs is invisible and language-dependent, and that’s where this story gets interesting.

Option 1: PCIe Switches, the Hidden Bottleneck

When a server has more NVMe drives than available CPU PCIe lanes, the standard industry solution is a PCIe switch (Broadcom’s PEX series). A switch takes a x16 uplink from the CPU and fans it out to, say, 4x NVMe drives at x4 each. On paper, the total downstream bandwidth matches the uplink. In practice, it doesn’t.

The Latency Tax

Every PCIe switch hop adds approximately 700 nanoseconds of latency to every I/O transaction. For a single 4 KB random read from a modern NVMe SSD (which completes in ~10 microseconds), that’s a 7% latency penalty per hop. Stack two switches (common in dense JBOF configurations) and you’re at 14%, before you’ve done anything in software.

The Bandwidth Bottleneck

A PCIe switch doesn’t create bandwidth; it shares an uplink. Four NVMe SSDs behind a x16 PCIe 5.0 switch share 64 GB/s of uplink bandwidth. Each drive can individually sustain 14 GB/s of sequential reads. If all four drives are active simultaneously:

- Aggregate demand: 4 x 14 = 56 GB/s

- Uplink capacity: 64 GB/s

- Headroom: 14%. Enough for sequential, but under mixed random I/O with metadata overhead, this oversubscription becomes real contention

Research from USENIX NSDI 2024 on routable PCIe fabrics measured up to 30% bandwidth degradation when crossing PCIe switch boundaries under realistic workloads, with host-to-device throughput dropping from theoretical maximums to 8.4 GB/s in some configurations.

The Cost

PCIe switches aren’t free. A Broadcom PEX88096 (96-lane PCIe 4.0 switch) adds $200-400 per chip, consumes 15-25W of power, and occupies board real estate. In a 48-drive chassis, you might need 4-6 switches, adding $1,000-2,000 and 60-150W to the BOM, a meaningful fraction of the server’s total cost and thermal budget.

The takeaway: PCIe switches are a necessary evil when CPUs don’t provide enough lanes, but they introduce latency, bandwidth contention, cost, and power overhead that directly degrades storage performance.

Option 2: Dual-Socket, More Lanes, More Problems

The alternative is to use two CPUs, each with its own PCIe root complex, collectively providing enough lanes for direct-attach NVMe without switches. This is where Intel’s Xeon 6 Granite Rapids architecture becomes compelling.

Intel Xeon 6760P: The Lane Count King

The Intel Xeon 6760P (Granite Rapids) offers:

- 88 PCIe 5.0 lanes per socket

- 4 UPI links at 24 GT/s for inter-socket communication

- 64 P-cores at 2.2 GHz base / 3.8 GHz turbo

- 320 MB cache, 8 DDR5-6400 memory channels

- 330W TDP

In dual-socket configuration: 176 PCIe 5.0 lanes total. That’s enough for 32 NVMe drives at x4 each (128 lanes) plus two 100GbE NICs (32 lanes) plus management (4 lanes), 164 lanes used, 12 spare, all directly attached to CPU root complexes with zero PCIe switches.

For comparison:

| Platform | Lanes/Socket | Dual-Socket Total | Notes |

|---|---|---|---|

| Intel Xeon 6760P | 88 PCIe 5.0 | 176 | 4 UPI @ 24 GT/s |

| AMD EPYC 9654 (Genoa) | 128 PCIe 5.0 | 160* | Infinity Fabric consumes lanes |

| AMD EPYC 9654P (single) | 128 PCIe 5.0 | 128 | No NUMA, all lanes for I/O |

| AMD EPYC 9755 (Turin) | 128 PCIe 5.0 | 160* | Same IF tradeoff |

*AMD EPYC dual-socket allocates a portion of each CPU’s Infinity Fabric links to inter-socket communication, reducing usable PCIe lanes. The net gain from adding a second socket is only 32 lanes (160 - 128 = 32), not a full doubling.

Intel’s architecture is different: UPI links are separate from PCIe lanes. Adding a second Xeon 6760P gives you a full additional 88 PCIe lanes without sacrificing any from the first socket. This makes dual-socket Intel uniquely attractive for NVMe-dense configurations.

The NUMA Problem

But dual-socket introduces NUMA (Non-Uniform Memory Access). In a dual-socket system, each CPU has its own local memory and its own PCIe lanes. When a thread on Socket 0 accesses memory attached to Socket 1, it must traverse the inter-socket link (UPI for Intel, Infinity Fabric for AMD), incurring a penalty:

| Access Type | Typical Latency |

|---|---|

| Local memory (same socket) | ~90 ns |

| Remote memory (cross-socket) | ~120-180 ns |

| Penalty | 30-100% overhead |

For a storage system, this means: if a thread on Socket 0 processes an I/O request for an NVMe drive attached to Socket 1, every memory access involved in that I/O (reading the command buffer, copying data, computing checksums) pays the cross-socket tax. At 100,000+ IOPS per drive, this adds up to milliseconds of aggregate penalty per second per drive.

The solution is conceptually simple: pin I/O threads to the same socket as their drives. Socket 0’s threads handle Socket 0’s NVMe drives; Socket 1’s threads handle Socket 1’s. Memory allocations stay local. PCIe transactions stay local. The inter-socket link carries only coordination traffic, not data.

The question is: can your storage software actually do this?

Go’s NUMA Blindness: A Structural Problem

Go, the language behind several major object storage systems, has a fundamental problem with NUMA. It’s not a bug. It’s a design decision that permeates the runtime.

The Goroutine Scheduler Doesn’t Know About Sockets

Go’s runtime scheduler (the GMP model: Goroutines, M threads, P processors) was designed for throughput on uniform memory architectures. It has no concept of NUMA nodes, sockets, or memory locality.

Key behaviors that destroy NUMA performance:

1. Work stealing crosses socket boundaries freely.

When a P (logical processor) runs out of goroutines to execute, it steals work from other P’s, including P’s on the other NUMA node. A goroutine that was allocated its stack, its buffers, and its mcache on Socket 0 can be stolen and resume execution on Socket 1. Every subsequent memory access hits remote DRAM.

The NUMA-aware scheduler proposal by Dmitry Vyukov (2014) acknowledged this problem and designed a solution with per-node run queues and node-local work stealing preferences. It was never implemented. Over a decade later, Go’s scheduler remains NUMA-unaware.

2. Memory allocation is NUMA-oblivious.

Go’s memory allocator (based on TCMalloc) uses per-P mcaches backed by a global mheap. When a goroutine allocates memory, it comes from the OS page that happens to be available, which may be on either NUMA node. There is no mechanism to request node-local allocation, and no per-node memory pools.

The allocator is designed to be fast (lock-free per-P fast path), not local. In a dual-socket system:

- A goroutine on Socket 0 may allocate a 64 KB I/O buffer from Socket 1’s memory

- Every byte copied to/from that buffer pays the cross-socket penalty

- The GC (which scans heap objects) also traverses remote memory, adding to cross-socket traffic

3. The GC generates cross-socket traffic.

Go’s concurrent garbage collector uses multiple worker goroutines that scan the entire heap. GC workers on Socket 0 will scan objects physically located in Socket 1’s DRAM, generating sustained cross-socket memory traffic during every GC cycle. For a storage system under load, which allocates and frees millions of I/O buffers per second, GC cycles are frequent and cross-socket traffic is substantial.

4. runtime.LockOSThread() is a blunt hammer.

Go provides LockOSThread() to pin a goroutine to its current OS thread, and you can then use syscall to set CPU affinity on that thread. But this defeats Go’s scheduler entirely for that goroutine; it can’t be preempted, work-stolen, or multiplexed. Doing this at scale (pinning thousands of I/O-handling goroutines) turns Go’s concurrency model into an expensive wrapper around manual thread management.

The Result: Single-Socket AMD Became the Default

The practical consequence of Go’s NUMA blindness is that the Go storage ecosystem avoided dual-socket systems entirely. AMD’s EPYC P-series (single-socket SKUs) became the de facto choice:

- EPYC 9654P: 96 cores, 128 PCIe 5.0 lanes, single socket, no NUMA

- EPYC 9755P (Turin): 128 cores, 128 PCIe 5.0 lanes, single socket, no NUMA

With 128 lanes, you can direct-attach 24 NVMe drives (96 lanes) with room for networking (32 lanes). No NUMA means Go’s scheduler works fine; all memory is local, all PCIe transactions are local, work stealing has no penalty.

But 128 lanes is the ceiling. For 32+ drive configurations, you either add PCIe switches (with their latency and bandwidth penalties) or you leave performance on the table. Go’s language runtime limits your hardware architecture to single-socket, which in turn limits your NVMe density to what one socket can feed.

This is the invisible tax. No benchmark captures it because nobody benchmarks the configuration they can’t run. The comparison isn’t “Go on dual-socket vs. Go on single-socket” (where dual-socket would lose due to NUMA penalties). The comparison is “Go on single-socket with 24 NVMe drives” vs. “a NUMA-aware system on dual-socket with 48 NVMe drives.” The latter configuration simply doesn’t exist in the Go storage world.

Rust Eliminates the NUMA Tax

Rust gives you the tools to exploit dual-socket systems without paying the NUMA penalty. Not as an afterthought or a workaround, but as first-class capabilities that compose with the language’s ownership and concurrency model.

Thread-to-Core Pinning

Rust’s core_affinity crate and direct libc::sched_setaffinity calls let you pin threads to specific cores with zero overhead:

use core_affinity::CoreId;

// Pin current thread to core 0 (Socket 0)

core_affinity::set_for_current(CoreId { id: 0 });With tokio, you configure this at runtime initialization:

tokio::runtime::Builder::new_multi_thread()

.worker_threads(16)

.on_thread_start(|| {

// Pin each worker thread to cores on the local NUMA node

let core_id = determine_local_core();

core_affinity::set_for_current(core_id);

})

.build()You can run two separate tokio runtimes, one pinned to Socket 0’s cores, one pinned to Socket 1’s cores, each handling I/O for its local NVMe drives. No cross-socket migration, no remote memory access, no NUMA penalty.

NUMA-Aware Memory Allocation

Rust’s custom allocator support (via the Allocator trait and #[global_allocator]) lets you use NUMA-aware allocators like jemalloc with per-NUMA-node arenas, or wrap libnuma’s numa_alloc_onnode() directly:

// Allocate a buffer on NUMA node 0

let buf = numa_alloc_onnode(size, 0);Because Rust has no GC, once you allocate memory on a specific NUMA node, it stays there until you explicitly free it. No background process will scan it from the wrong socket. No compaction will move it. The allocation is deterministic and local for its entire lifetime.

No GC Crossing Socket Boundaries

This is Rust’s most significant NUMA advantage, and it requires zero code. Because there is no garbage collector:

- No GC worker threads scanning remote DRAM

- No stop-the-world pauses generating cross-socket traffic spikes

- No heap compaction moving objects between NUMA nodes

- No allocation pressure causing the runtime to grab memory from the wrong node

Memory is freed when it goes out of scope. Drop runs on the thread that owns the value, the thread you pinned to the local socket. The entire lifecycle is NUMA-local by construction.

The Dual-Socket Rust Architecture

Here’s what a NUMA-aware Rust storage node looks like on a dual Xeon 6760P system:

Socket 0 (88 PCIe lanes) Socket 1 (88 PCIe lanes)

├─ 16 NVMe drives (64 lanes) ├─ 16 NVMe drives (64 lanes)

├─ 1x 100GbE NIC (16 lanes) ├─ 1x 100GbE NIC (16 lanes)

├─ 8 remaining lanes (management) ├─ 8 remaining lanes (management)

│ │

├─ Tokio runtime A (32 cores) ├─ Tokio runtime B (32 cores)

│ ├─ Pinned to Socket 0 cores │ ├─ Pinned to Socket 1 cores

│ ├─ NUMA-local memory pool │ ├─ NUMA-local memory pool

│ ├─ Handles Socket 0 NVMe I/O │ ├─ Handles Socket 1 NVMe I/O

│ └─ Local S3 request processing │ └─ Local S3 request processing

│ │

└─ 4 DDR5-6400 channels (local) └─ 4 DDR5-6400 channels (local)

│ │

└──────── UPI (4 links x 24 GT/s) ─────┘

(coordination only, not data)32 NVMe drives, all direct-attach, zero PCIe switches, zero NUMA penalty. The UPI links handle only cluster coordination traffic (heartbeats, placement queries, metadata RPCs), not bulk data I/O.

Contrast this with what a Go storage system would have to settle for:

Single Socket AMD EPYC 9654P (128 PCIe lanes)

├─ 24 NVMe drives (96 lanes) ← maximum without switches

├─ 2x 100GbE NIC (32 lanes)

└─ 0 remaining lanes

OR

├─ 32 NVMe drives (128 lanes) ← requires stealing NIC lanes or adding switches

├─ Networking through PCIe switch (added latency + cost)The Rust system serves 33% more drives at full bandwidth with no switches, while the Go system either caps at 24 drives or adds switches that degrade every I/O operation.

Looking Forward: The Lane Arms Race

Intel’s leaked Nova Lake platform (expected late 2026) introduces the LGA1954 socket with a new 900-series chipset providing up to 48 additional PCIe lanes from the chipset alone. Combined with CPU-direct lanes, dual-socket Nova Lake systems could push past 200 usable PCIe 5.0 lanes, enough for 48+ direct-attach NVMe drives.

AMD’s roadmap continues to prioritize single-socket density (128-160 lanes), but the next generation of CXL-enabled memory tiering will introduce new NUMA-like topologies where memory can be attached via CXL to either socket, further widening the gap between NUMA-aware and NUMA-oblivious software.

The trend is clear: hardware is providing more PCIe lanes, more NUMA nodes, and more complex memory topologies. Software that can’t exploit this hardware leaves performance, and drive density, on the table.

The Bottom Line

The NVMe density problem is a hardware problem that demands a software solution:

- More NVMe drives per chassis require more PCIe lanes than any single socket provides

- PCIe switches fill the gap but add 700ns+ latency and up to 30% bandwidth degradation

- Dual-socket CPUs (especially Intel Xeon 6760P with 176 total PCIe 5.0 lanes) provide enough lanes for 32+ direct-attach drives

- But dual-socket means NUMA, and NUMA requires software that can pin threads, localize memory, and avoid cross-socket traffic

- Go can’t do this. Its scheduler, allocator, and GC are structurally NUMA-unaware, with a decade-old proposal to fix it that was never implemented

- Rust can. Thread pinning, NUMA-local allocation, no GC, and deterministic memory lifecycle make dual-socket zero-penalty

The Go storage ecosystem’s retreat to single-socket AMD wasn’t a preference; it was a concession. A concession that limits NVMe density, forces reliance on PCIe switches, and leaves 33-50% of potential drive slots unusable.

As NVMe capacities grow and drive counts per chassis climb, the storage software that can exploit dual-socket NUMA hardware without penalty will deliver more capacity, more bandwidth, and lower latency per rack unit than any Go-based alternative. Not because of language speed, but because of hardware utilization that Go’s runtime model structurally prevents.

Intel Xeon 6760P specifications from Intel ARK. AMD EPYC lane allocation behavior documented in AMD’s EPYC 9004 architecture overview. PCIe switch latency measurements from Broadcom documentation and USENIX NSDI 2024. NUMA latency figures from Intel VTune documentation. Go NUMA scheduler proposal from Dmitry Vyukov’s design document. Intel Nova Lake platform details from Tom’s Hardware. Rust NUMA thread pinning via core_affinity crate and tokio affinity guide.